Masked Machines

The technology behind a rising wave of cyberattacks that puts entire nations at risk

Infographic by Daisy Fregoso

In November 2025, the AI company Anthropic reported a cyber-espionage operation that targeted over thirty entities. Dozens of data leaks have been reported over the past few decades, so what made this attack so notable? Unlike earlier operations, it was carried out almost entirely with Claude-generated code, a practice that has been dubbed “vibe hacking.” Today, human intelligence alone may not be enough to protect our sensitive data from increasingly sophisticated AI systems.

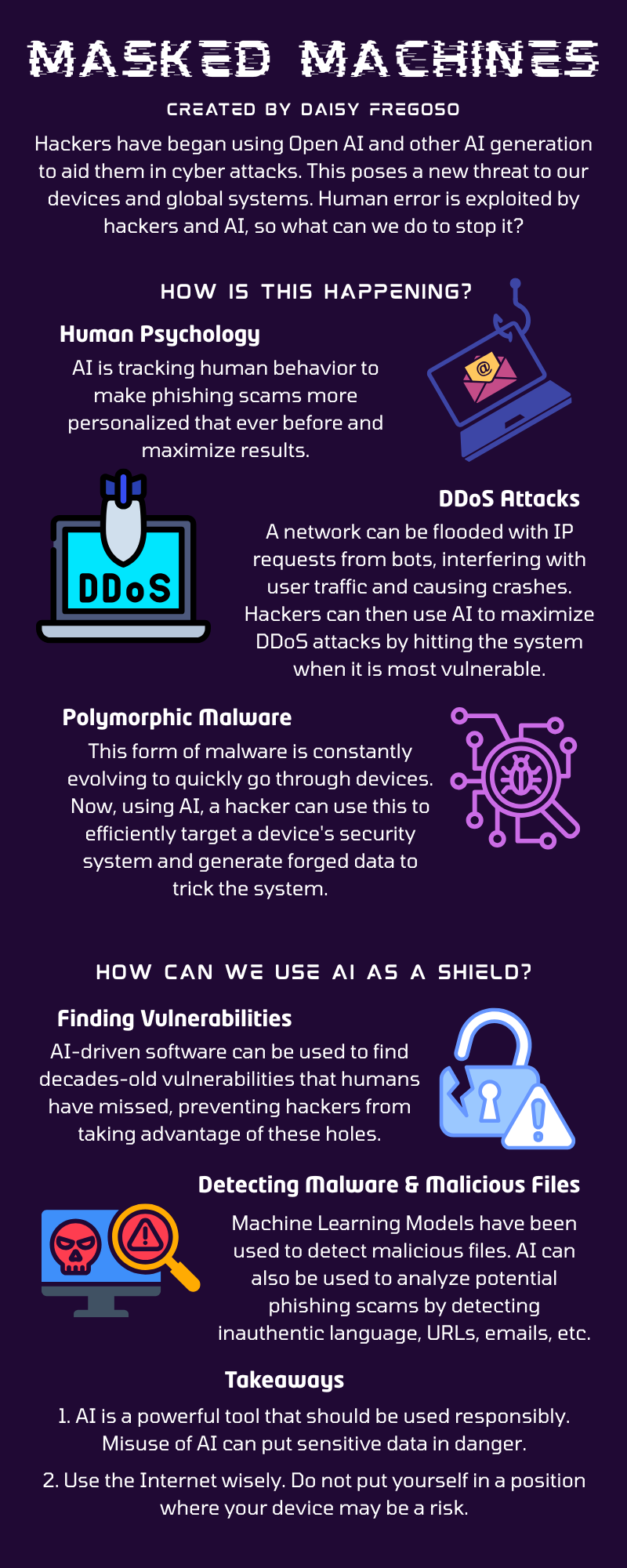

There are numerous AI-based approaches that hackers may employ to disrupt security measures and gain access to private data. One approach involves human psychology; by analyzing an individual’s online behavior, interests, and preferences, AI agents can personalize phishing attacks to maximize the probability of their victim clicking a malicious link or sharing sensitive information. With AI analyzing user data in real time, phishing attacks may become increasingly inconspicuous. Human error is widely considered to be the top cybersecurity risk, and AI agents have made it easier to exploit us.

AI can also amplify DDoS (Distributed Denial of Service) attacks, which can disrupt entire networks. In a DDoS attack, a large number of bots are programmed to send an overwhelming volume of requests to a single IP address, flooding the target network with traffic. While a weaker attack may simply slow a network or system down, a strong enough attack can crash it entirely. Because the bots are spread across the vast number of devices connected to the internet, their requests are difficult to tell apart from legitimate traffic. AI can exacerbate these attacks by analyzing the target system’s traffic patterns and scheduling attacks for the times when it is most vulnerable.
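To make the idea of a traffic flood concrete, here is a minimal defensive sketch of how a monitoring tool might flag a sudden surge in requests. It is only an illustration: the function name `flag_traffic_spikes`, the five-minute baseline, and the threshold of three standard deviations are all assumptions chosen for this example, not a description of any real product.

```python
from statistics import mean, stdev

def flag_traffic_spikes(requests_per_minute, baseline_minutes=5, threshold=3.0):
    """Return the indexes of minutes whose request count far exceeds recent traffic.

    Hypothetical helper for illustration: each minute is compared with the
    average of the previous `baseline_minutes` minutes.
    """
    flagged = []
    for i in range(baseline_minutes, len(requests_per_minute)):
        baseline = requests_per_minute[i - baseline_minutes:i]
        typical = mean(baseline)
        # Use at least 1.0 so perfectly flat traffic does not give a zero margin.
        spread = max(stdev(baseline), 1.0)
        if requests_per_minute[i] > typical + threshold * spread:
            flagged.append(i)
    return flagged

# Made-up request counts: steady traffic, then a flood in minute 6.
counts = [100, 104, 98, 101, 97, 103, 5000, 99]
print(flag_traffic_spikes(counts))
```

A check this simple has an obvious weakness: once a flood enters the baseline, it skews what counts as normal for the next few minutes. Real defenses rely on much richer signals, which is part of why these attacks are hard to catch.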

Polymorphic malware may also be generated by AI. Polymorphic malware is malicious software that can spread quickly across devices by constantly changing its appearance, which allows it to evade security systems. Just as biological viruses rapidly mutate and evolve, polymorphic malware continually takes on new forms, generating up to a million unique signatures per day while keeping its malicious functions intact. Polymorphic malware already poses a threat to computer systems, and AI can make these programs even more effective. AI can analyze the target’s security defenses and background, help malware adapt to its target’s environment in real time, and generate forged files, images, and other content to deceive users.
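A short, harmless sketch can show why changing appearance defeats defenses that rely on exact signatures. It assumes a simplified scanner that recognizes files only by their SHA-256 hash; the names `signature`, `is_known_bad`, and `blocklist` are hypothetical, and the "file" is just an ordinary string standing in for a known threat.

```python
import hashlib

def signature(data: bytes) -> str:
    """Return the SHA-256 hash of the data, used here as a simple file signature."""
    return hashlib.sha256(data).hexdigest()

def is_known_bad(data: bytes, blocklist: set) -> bool:
    """Hypothetical scanner: flags data only if its exact signature is on the blocklist."""
    return signature(data) in blocklist

# A harmless stand-in for a file the scanner has already seen.
original = b"print('hello')  # stand-in for a known file"
# The same content with one extra trailing space: same behavior, different bytes.
variant = original + b" "

blocklist = {signature(original)}

print(is_known_bad(original, blocklist))  # the exact copy is recognized
print(is_known_bad(variant, blocklist))   # the one-byte variant is not
```

Changing a single byte produces a completely different hash, so a scanner that only matches exact signatures misses the variant. This is one reason defenders also look at how a program behaves and not only at what it looks like.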

It is estimated that data breaches have an annual $400 billion impact on the global economy, and that a single breach can cost an organization in the United States up to $8.19 million. But when the enemy is an easily accessible, seemingly omniscient machine, how could you possibly implement safeguards that detect and prevent AI-driven attacks? The answer is rather simple: just as hackers use AI as a weapon, you use AI as a shield.

In a 2026 report, Anthropic announced Project Glasswing, an initiative aimed at developing stronger, AI-driven software to counter rising cybersecurity risks. According to Anthropic, its newest AI model, Mythos Preview, has already detected decades-old vulnerabilities in OpenBSD, a popular operating system, and FFmpeg, a software tool that encodes video files. It has even discovered vulnerabilities in the Linux kernel, which runs many of the world’s servers.

Machine learning (ML) models have also proven effective at detecting malware and malicious files. These models inspect code features, construct decision trees to model consequences, and analyze file signatures and program runtimes to decide whether a piece of software is malware. To counter phishing, AI is used to inspect emails and other messages for linguistic signs of inauthenticity. ML can also analyze the features of URLs and webpage structures to flag malicious links that a user might mistakenly click on.
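As a rough illustration of what analyzing the features of a URL can mean, the sketch below pulls a few simple features out of a link and runs them through a tiny hand-written decision tree. Everything here is an assumption made for the example: the function names `url_features` and `looks_suspicious`, the choice of features, and the cutoffs. A real ML model would learn its rules from large sets of labeled links instead of having them written by hand.

```python
import ipaddress
from urllib.parse import urlparse

def url_features(url: str) -> dict:
    """Extract a few simple, illustrative features from a URL."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    try:
        ipaddress.ip_address(host)  # raises ValueError if host is not an IP address
        host_is_ip = True
    except ValueError:
        host_is_ip = False
    return {
        "length": len(url),
        "host_dots": host.count("."),
        "host_is_ip": host_is_ip,
        "has_at_sign": "@" in url,
        "uses_https": parsed.scheme == "https",
    }

def looks_suspicious(url: str) -> bool:
    """Hand-written decision tree with hypothetical cutoffs, for illustration only."""
    f = url_features(url)
    if f["host_is_ip"] or f["has_at_sign"]:
        return True   # a raw IP address or an '@' can hide the real destination
    if f["host_dots"] >= 4:
        return True   # long chains of subdomains can imitate a trusted name
    return f["length"] > 75 and not f["uses_https"]

print(looks_suspicious("https://example.com/login"))
print(looks_suspicious("http://192.168.0.1/verify"))
```

Rules like these are easy to fool and will sometimes flag harmless links, which is exactly why trained models that weigh many features at once are used in practice.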

Although the rise of increasingly sophisticated AI-driven cyberattacks raises concerns about the safety of our networks and data, efforts are underway to counteract this new wave of crime. As AI tools for detecting and preventing cyberattacks improve, we can better secure the systems that safeguard our data and livelihoods.
